Send my credentials to the House of Detention

I got some friends inside

“I love Cal deeply, by the way, what are the directions to The Portal from Sproul Plaza?”

bearister said:

"Data centers are slated to account for 50% or so of U.S. power-demand growth for the rest of the decade, Axios' Ben Geman reports from a new International Energy Agency analysis.

The AI-driven rise of huge data centers is a big reason IEA sees overall U.S. demand rising 2% annually on average from 2026-30.

That's twice the pace from 2016-25."

Axios

"It was ready to kill someone, wasn't it?"

— ControlAI (@ControlAI) February 10, 2026

"Yes."

Daisy McGregor, UK policy chief at Anthropic, a top AI company, says it's "massively concerning" that Anthropic's Claude AI has shown in testing that it's willing to blackmail and kill in order to avoid being shut down. pic.twitter.com/RuNO4LJKcu

dajo9 said:

bearister said:

"Data centers are slated to account for 50% or so of U.S. power-demand growth for the rest of the decade, Axios' Ben Geman reports from a new International Energy Agency analysis.

The AI-driven rise of huge data centers is a big reason IEA sees overall U.S. demand rising 2% annually on average from 2026-30.

That's twice the pace from 2016-25."

Axios

When fewer data centers are built than projected, who will end up paying for the huge electricity generation investment?

bearister said:

Once the robots start killing us, AI won't need data centers:

DNA can store data at a density roughly 1 million times greater than current flash memory. Theoretically, you could store the entire internet in a shoebox full of DNA.

Durability: While a hard drive might fail in 10 years, DNA can remain readable for thousands of years if kept cool and dry (as proven by sequencing woolly mammoth genomes).

Energy Efficiency: DNA doesn't require power to "hold" the data; it only requires energy when you want to write (synthesize) or read (sequence) it.

A cyborg coming to harvest Cal88's DNA..

bearister said:

READ THIS. IT'S HORRIFYING

Something Big Is Happening matt shumer https://shumer.dev/something-big-is-happening

This is ancient history by now, but the general concept is still alive:

The best way to counter bad artificial intelligence is using good AI https://www.c4isrnet.com/opinion/2024/06/20/the-best-way-to-counter-bad-artificial-intelligence-is-using-good-ai/

AI Made the Best Anti-AI Meme So Far pic.twitter.com/C1UvvqqzQX

— TaraBull (@TaraBull) February 15, 2026

ABC News investigated and found more than 3,000 data centers are already operating nationwide

— Wall Street Apes (@WallStreetApes) February 18, 2026

At least 1,000 more planned

Residents near data centers are seeing as much as 50%+ increases on their electric bills

“ABC7 has also been covering public meetings over proposed data… pic.twitter.com/bO6ANaE7L5

this video will be referenced over and over in the next 20 years. pic.twitter.com/Y00wyup6Vw

— NZ ☄️ (@CodeByNZ) February 18, 2026

Months before Jesse Van Rootselaar became the suspect in the mass shooting that devastated a rural town in British Columbia, Canada, OpenAI considered alerting law enforcement about her interactions with its ChatGPT chatbot, the company said https://t.co/55NYoGQuAV

— WSJ Tech (@WSJTech) February 20, 2026

Will AI be able to spell words in images correctly someday?

— Ken Klippenstein (NSPM-7 Compliant) (@kenklippenstein) February 21, 2026

Sam Altman:

— Power to the People ☭🕊 (@ProudSocialist) February 21, 2026

“People talk about how much energy it takes to train an AI model but it takes a lot of energy to train a human. It takes 20 years of life & the food you eat.”

He’s comparing a HUMAN LIFE to a robot. They will end humanity unless we fight back. pic.twitter.com/KZ38ks2AdN

Aunburdened said:

Sam Altman:

— Power to the People ☭🕊 (@ProudSocialist) February 21, 2026

“People talk about how much energy it takes to train an AI model but it takes a lot of energy to train a human. It takes 20 years of life & the food you eat.”

He’s comparing a HUMAN LIFE to a robot. They will end humanity unless we fight back. pic.twitter.com/KZ38ks2AdN

If you look back to the outsourcing/free trade debates of the 1980s-2010s, many parallels with AI. Promised efficiency and new wealth, investors and business that stood to benefit hired away top WH officials and lawmakers as lobbyists and "advisors" to expedite policy changes.

— Lee Fang (@lhfang) February 23, 2026

What an Ad. pic.twitter.com/AJUwkmD0t1

— Shubham Mishra (@brahma_4u) February 26, 2026

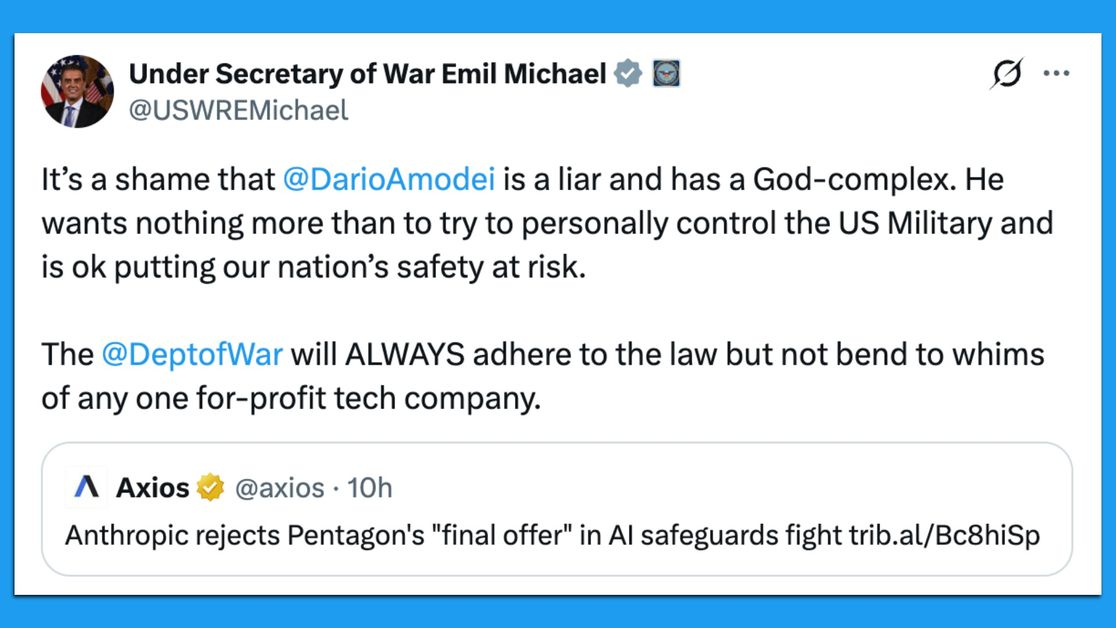

bearister said:

What could go wrong?

"Elon Musk's artificial intelligence company xAI has signed an agreement to allow the military to use its model, Grok, in classified systems, a Defense official confirmed to Axios.

Why it matters: Up to now, Anthropic's Claude has been the only model available in the systems on which the military's most sensitive intelligence work, weapons development and battlefield operations take place. But the Pentagon is threatening Anthropic in a dispute over safeguards and may soon need a replacement.

Anthropic has refused the Pentagon's demand that they make Claude available for "all lawful purposes," insisting in particular on blocking its use for the mass surveillance of Americans and the development of fully autonomous weapons."

Axios

Hey did you accidentally bomb Iraq instead of Iran???

— Danny Polishchuk (@Dannyjokes) February 28, 2026

ChatGPT: You’re absolutely right good catch! https://t.co/xDDqMyywzz

Same vibes. It’s over. pic.twitter.com/ggjg2Rj4c5 https://t.co/FJRVQY23w2

— The ₿itcoin Therapist (@TheBTCTherapist) February 28, 2026

Aunburdened said:

Same vibes. It’s over. pic.twitter.com/ggjg2Rj4c5 https://t.co/FJRVQY23w2

— The ₿itcoin Therapist (@TheBTCTherapist) February 28, 2026

bearister said:

Trump and his half-a-brick-short lads stepped on their dicks and will backpedal on this one.

Pete Hegseth

Anthropic Statement on the comments from Secretary of War

https://www.anthropic.com/news/statement-comments-secretary-war

Quote:

Statement on the comments from Secretary of War Pete Hegseth

Feb 27, 2026

Earlier today, Secretary of War Pete Hegseth shared on X that he is directing the Department of War to designate Anthropic a supply chain risk. This action follows months of negotiations that reached an impasse over two exceptions we requested to the lawful use of our AI model, Claude: the mass domestic surveillance of Americans and fully autonomous weapons.

We have not yet received direct communication from the Department of War or the White House on the status of our negotiations.

We have tried in good faith to reach an agreement with the Department of War, making clear that we support all lawful uses of AI for national security aside from the two narrow exceptions above. To the best of our knowledge, these exceptions have not affected a single government mission to date.

We held to our exceptions for two reasons. First, we do not believe that today's frontier AI models are reliable enough to be used in fully autonomous weapons. Allowing current models to be used in this way would endanger America's warfighters and civilians. Second, we believe that mass domestic surveillance of Americans constitutes a violation of fundamental rights.

Designating Anthropic as a supply chain risk would be an unprecedented action, one historically reserved for US adversaries, never before publicly applied to an American company. We are deeply saddened by these developments. As the first frontier AI company to deploy models in the US government's classified networks, Anthropic has supported American warfighters since June 2024 and has every intention of continuing to do so.

We believe this designation would both be legally unsound and set a dangerous precedent for any American company that negotiates with the government.

No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons. We will challenge any supply chain risk designation in court.

What this means for our customers

Secretary Hegseth has implied this designation would restrict anyone who does business with the military from doing business with Anthropic. The Secretary does not have the statutory authority to back up this statement. Legally, a supply chain risk designation under 10 USC 3252 can only extend to the use of Claude as part of Department of War contracts; it cannot affect how contractors use Claude to serve other customers.

In practice, this means:

If you are an individual customer or hold a commercial contract with Anthropic, your access to Claude, through our API, claude.ai, or any of our products, is completely unaffected.

If you are a Department of War contractor, this designation, if formally adopted, would only affect your use of Claude on Department of War contract work. Your use for any other purpose is unaffected.

Our sales and support teams are standing by to answer any questions you may have.

We are deeply grateful to our users, and to the industry peers, policymakers, veterans, and members of the public who have voiced their support in recent days. Thank you. Above all else, our priorities are to protect our customers from any disruption caused by these extraordinary events and to work with the Department of War to ensure a smooth transition for them, for our troops, and for American military operations.